It’s been over 4 years since I left Twilio but no career experience outside of Runscope has had a bigger impact on me. With the impending Twilio IPO, I’m finding myself especially nostalgic about my time there. I was only there two years, but I learned so much.

Discovering Twilio

In 2008 I was a web developer in the Twin Cities. I ran a big softball league and when it would rain I needed to notify 600+ people that the games were canceled. I used my cell phone’s voicemail as a hotline for a while but that rendered my phone useless on game days. I thought it was ridiculous that everyone had to ‘poll’ the hotline for updates. I wanted push notifications via phone.

My first attempt at this involved trying to set up Asterisk. Being a Windows user, though, I didn’t even get past getting a Linux distro set up (kids, learn *nix). I gave up pretty quickly.

One night during the off season I was reading TechCrunch when I came across a post about Twilio. It sounded like the perfect fit. I went to the site, read the docs and a few minutes later my phone was ringing. I don’t know how to describe the feeling I had. I recall running around the house. I think I scared my wife.

I was hooked. In just a few minutes a whole new set of capabilities opened up to me. I was consumed with ideas of telephony apps to build. My eyes were opened to the power of APIs. Stumbling on that TechCrunch post literally changed my life.

From Fanboy to Employee

I started blogging about Twilio, writing sample apps and helper libraries, giving talks at user groups and regularly participating in their weekly IRC office hours where I virtually got to know Danielle Morrill, Jeff Lawson, Evan Cooke and other members of the team. Not too long after, I won the infamous Netbook contest which I was ridiculously proud of. Ironically, when I later took over running the contest I went to the master spreadsheet and found out there was only one other entry that week and it didn’t even work. I didn’t care; a win is a win.

In early 2010 I was in Las Vegas for a trade show for the company I was working for. I saw that Danielle was tweeting about being at a different show there and so we started planning to meet in person for the first time. I was able to leave my show early and go over to the conference center where Twilio was exhibiting. The security guard wouldn’t let me in without a badge. I hung out near the entrance hoping to catch them on the way out but I noticed an exit-only side door people were leaving the show through. When the guard wasn’t looking, I snuck through the door. Conveniently, the Twilio booth was just inside where I found Danielle and told her how I got in much to her amusement (if you know Danielle, you know this is her kind of thing).

We chatted a bit and then she mentioned she wanted to introduce me to Jeff Lawson. When we found him, she introduced me by saying, “Jeff, this is John Sheehan, my first choice for our evangelist job opening.” This was news to me on two fronts: that such a job existed and that I was a candidate for it (I had only previously applied for a PHP developer job I was not qualified for).

Jeff and I chatted and he invited me to dinner with some Twilio customers, investors and other team members. It felt like a dry run of doing an evangelist’s job. After dinner they told me they wanted me to come out to SF for an interview. A few days later, without even visiting, I got an offer that I quickly accepted. I started April 5th, 2010 as Twilio’s first full-time Developer Evangelist and Twilio’s 10th employee.

Being an Evangelist

I didn’t really have a job description. I knew my job was to help bring developers to Twilio by doing the things I was already doing but I didn’t know what success looked like. We ended up mostly focusing on registered developers as our success metric but found the best way to drive that was not to evangelize (UGH STILL HATE THAT WORD) Twilio but to help developers be more successful. Period. Eventually those relationships would benefit the company when it benefited the developer.

I really loved doing the job. I met so many great people, many of whom I still consider friends. I got way better at writing and presenting. We were blazing a trail for how small companies could do developer outreach (remember that prior to 2010 most Developer Evangelists were at companies like Microsoft and Sun). The sign-ups were piling up and accelerating. It was exhausting and exhilarating. The team was growing and I eventually took it over. Shortly after, a new opportunity within the company emerged that I had to go after.

Working for Danielle

Danielle is the best manager I’ve ever had. No one has ever pushed me harder. She gave me a ton of autonomy and trusted me to do what I thought was best for the company and our community. She made sure I was recognized for my successes and took the fall for my failures.

She knew how competitive I was and used it to push me further. We had a 1–1 meeting once where she told me whoever got the most blog page views that month would win something. I found out the next month (after “winning”) she hadn’t included anyone else on the team in the contest. I was really happy with the results and too impressed with being hoodwinked to be upset.

Danielle is a polarizing figure but is one of the sharpest people I know and someone I will never, ever bet against.

The API Debate

Shortly after I moved to SF to take over the evangelism team we were gearing up for a new product launch. Earlier in development, the team was having trouble coming up with a friendly API (JavaScript in this case). I made a proposal that got adopted. Having that level of impact on the design of a big new product from outside the product team was a big personal win.

Closer to launch, there were still some details to be worked out on the design. Under the pressure of shipping many people became involved in a debate on a final issue. PMs, devs, support, and even Jeff were trying to work it out. I felt very invested in the outcome considering my earlier contribution and having just moved to SF literally to be more involved in moments like this. It got a little heated and I ultimately ‘lost’. I was pretty devastated. My reaction didn’t sit well with a few people. I stewed a little bit but calmed down and got on with the launch. There was too much to do. Unfortunately this would come up again later.

Becoming a Product Manager

As the product line grew it became difficult for every team to focus on developer experience. The developer console, docs, helper libraries, etc. were starting to suffer a bit from a lack of attention. A new position opened up on the product team to be the Product Manager for developer experience. It felt like a perfect and obvious next step for me given the amount of direct customer feedback I had been exposed to the previous 18 months.

I don’t know what was going on behind the scenes, but it took a long time (around 3 months) to go from expressing interest to getting the job (earlier design debate incident perhaps?). I’m sure it was complicated but it was really frustrating. I didn’t really feel like I had a lot of momentum once the switch was official. My team was small, the projects were unsexy (the ones we wanted to do wouldn’t drive the business) and I was new at being a PM. It didn’t go particularly well.

The Breakdown

While I was failing at being a PM, some new initiatives in the company were being explored that I felt undermined our developer-friendly ethos. I understood the potential upside but it felt like the company was going through its biggest change yet (change was not uncommon; things felt very different at 10, 20, 50 and 100 people).

As a PM with a backlog a mile long, it was difficult to watch resources get diverted to things I didn’t believe in. I was also a bit burned out from the intensity of the previous 18 months. This all culminated in a meeting with Jeff (my manager at the time) where I broke down and cried over where things were going. I’ve never been more embarrassed in my career. I left the meeting with a sense that my time at Twilio was coming to an end soon.

I don’t blame Jeff or others in the company for pursuing those opportunities. I probably would have done the same in their position. While those projects were ultimately scuttled, I think the company learned a lot. I was really excited the first time I saw the “Ask Your Developer” billboard years later. It felt like a return to the Twilio I loved.

Leaving

I left in March of 2012. The company had gone from 10 to over 100 employees. Our registered developers had gone from single-digit thousands to over 100,000. The evangelism team was around 10 people strong and becoming a machine (Rob and crew have done an amazing job since, taking it to over 1M developers).

On my second-to-last day I accidentally spilled coffee on my laptop and destroyed it.

Quitting was a mixed bag of emotions. I missed the people. I missed the sense of urgency. I missed the prestige that came with being a part of a startup that was making it. But I was also relieved to not be so stressed all the time.

Do I wish I had stayed? The short answer is “no” but I’m definitely experiencing a bit of FOMO right now. I went to Twilio because I truly believed it had a chance to become a successful company. There was always a really strong sense of mission and endless opportunity. It was palpable sometimes. I would have loved to have seen that play out directly, but leaving was still the right choice. In the 4 years since, I’ve been incredibly fortunate. I owe my entire career since to having been at Twilio. I will probably be banking on that for years to come.

I have only one regret: I should have negotiated for more options.

Other Random Memories

Opening the first red track jacket, Jeff waiting on Andrew, missing every company photo, the Montana trip (and subsequent Owl reward), Super Startup Weekend, Epic Sax on a string, the Chicago demo fail, johnsheehanneedsajacket.com, Andre Bempton, Mark’s laugh, Frank taking a stand on ‘presents’, the fake Twitter feedback, 95% Taylor Swift, Bad Romance jam session, 4am Whataburger, London tube singing guy & Hawksmoor, Twilio’s first “acquisition” via expense report.

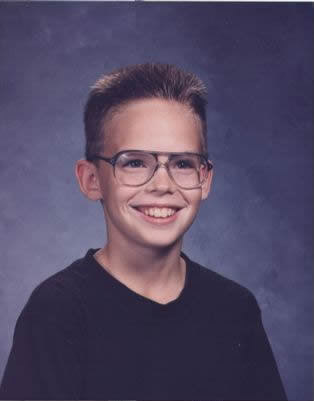

Age has always been a sensitive issue for me. When I was 5 my mom homeschooled me and when she couldn’t do it anymore and it was time to go to public school I was effectively done with first grade so she bumped me ahead to second grade. The rest of my primary and secondary school years I trailed all my classmates in age by a year. I got my license my junior year, graduated at 17, etc. I played sports on teams consisting of all older players. I was always very determined to prove that my age didn’t matter.